How AI Detects Patterns in Cyber Incidents

AI is transforming cybersecurity by moving beyond outdated signature-based methods to detect threats based on behavior. It establishes baselines for normal activity, identifies deviations, and uses machine learning to spot zero-day exploits, insider threats, and advanced attacks. Key takeaways include:

- Behavioral Analysis: AI monitors user and system behavior to detect anomalies, like unusual login times or file access.

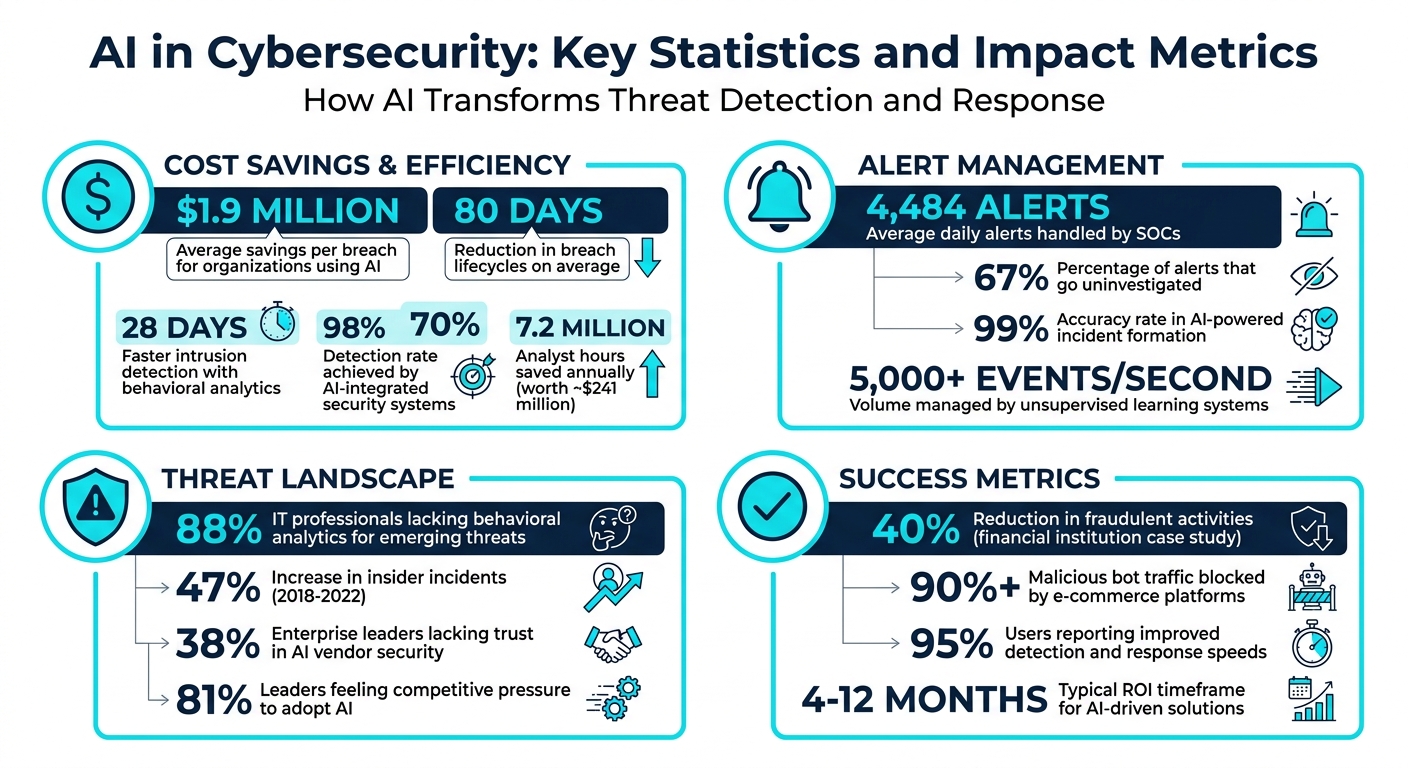

- Machine Learning: Models use historical data to identify patterns and predict threats, reducing breach lifecycles by 80 days on average.

- Anomaly Detection: Risk scores highlight suspicious activities, helping teams prioritize critical alerts.

- Unsupervised Learning: Analyzes unlabeled data to detect unknown threats, such as insider attacks or lateral movement.

- Natural Language Processing (NLP): Extracts actionable insights from unstructured sources like threat reports and open-source data.

- Predictive Analytics: Forecasts attack trends, enabling proactive defense against multi-stage attacks.

- Automated Responses: Integrates with tools like SOAR to quickly isolate threats and reduce response times.

AI bridges the gap between reactive and proactive security, helping organizations save $1.9 million per breach on average and cut down on manual workload. By combining machine learning, NLP, and predictive analytics, it provides faster, smarter threat detection and response.

Building Behavioral Baselines with Machine Learning

Machine learning transforms security logs into dynamic behavioral profiles, moving away from static rules that often trigger false alarms. By processing logs from various sources – firewalls, SIEM platforms, cloud APIs like AWS CloudTrail, and endpoint agents – AI normalizes the data into a unified format. This consolidated perspective allows for cross-source correlation, uncovering patterns that traditional tools might miss. This foundation supports real-time anomaly detection and advanced threat analysis.
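The following is a minimal sketch of that normalization step. It assumes two input shapes: a hypothetical firewall log with flat fields, and a record shaped like an AWS CloudTrail event (`eventTime`, `eventName`, `sourceIPAddress`, `userIdentity`). The unified `Event` schema and its field names are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Event:
    """Unified event schema (field names are illustrative)."""
    timestamp: datetime
    source: str
    user: str
    action: str
    src_ip: str


def from_firewall(raw: dict) -> Event:
    # Hypothetical firewall log: epoch seconds plus flat fields.
    return Event(
        timestamp=datetime.fromtimestamp(raw["ts"], tz=timezone.utc),
        source="firewall",
        user=raw.get("user", "unknown"),
        action=raw["verdict"],
        src_ip=raw["src"],
    )


def from_cloudtrail(raw: dict) -> Event:
    # CloudTrail-style record: ISO 8601 time with a trailing "Z", nested identity.
    return Event(
        timestamp=datetime.fromisoformat(raw["eventTime"].replace("Z", "+00:00")),
        source="cloudtrail",
        user=raw["userIdentity"].get("userName", "unknown"),
        action=raw["eventName"],
        src_ip=raw["sourceIPAddress"],
    )


def normalize(records):
    """Route each (kind, raw) pair to its parser and sort the unified stream by time."""
    parsers = {"firewall": from_firewall, "cloudtrail": from_cloudtrail}
    events = [parsers[kind](raw) for kind, raw in records]
    return sorted(events, key=lambda e: e.timestamp)
```

Once both sources share one schema and one clock (UTC), cross-source correlation reduces to ordinary filtering and joining on fields such as `user` and `src_ip`.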

The system learns what constitutes normal behavior for users and devices across four key areas: authentication (e.g., typical login times and geographic locations), file access (frequently accessed directories and interactions with sensitive documents), data movement (patterns in upload/download volumes and destinations), and collaboration (sharing activity with internal or external partners). AI identifies deviations from these norms – for instance, a DevOps engineer logging in at 11:00 PM might be expected, but an HR employee accessing engineering files at 2:00 AM would raise a red flag.
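The sketch below makes the authentication dimension concrete with a per-user login-hour baseline. The `LoginHourBaseline` class and its `min_share` threshold are hypothetical. The other three dimensions (file access, data movement, collaboration) would each need their own features.

```python
from collections import Counter, defaultdict


class LoginHourBaseline:
    """Per-user histogram of login hours (0-23) learned from a training window."""

    def __init__(self, min_share=0.05):
        # An hour counts as unusual if fewer than min_share of the user's past
        # logins fell within +/- 1 hour of it (illustrative threshold).
        self.min_share = min_share
        self.hours = defaultdict(Counter)

    def fit(self, logins):
        """logins: iterable of (user, hour) pairs."""
        for user, hour in logins:
            self.hours[user][hour] += 1
        return self

    def is_unusual(self, user, hour):
        counts = self.hours.get(user)
        if not counts:
            return True  # no baseline yet, so route to review
        total = sum(counts.values())
        # Include neighbouring hours, wrapping around midnight.
        nearby = sum(counts[(hour + d) % 24] for d in (-1, 0, 1))
        return nearby / total < self.min_share
```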

These behavioral baselines are essential for detecting threats based on unusual activity rather than relying solely on known attack signatures.

Training AI Models with Historical Data

AI models establish these behavioral patterns, or "patterns of life", by observing routine activities over training periods that typically span 21 to 90 days. During this phase, AI combines supervised learning (using labeled data) with unsupervised methods such as k-means clustering and autoencoders. This dual approach helps detect both known threats and unexpected anomalies, including zero-day attacks.
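The unsupervised half of that training can be sketched with plain k-means. The sketch clusters historical activity, then scores new events by their distance to the nearest learned centroid. Several choices here are assumptions: the two features (login hour and MB downloaded) and `k`. Features are left unscaled for brevity, and neither the supervised component nor the autoencoder is shown.

```python
import math
import random


def kmeans(points, k, iters=20, seed=0):
    """Lloyd's k-means on a list of numeric feature tuples."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        for i, members in enumerate(clusters):
            if members:  # keep the previous centroid if a cluster empties out
                centroids[i] = [sum(dim) / len(members) for dim in zip(*members)]
    return centroids


def anomaly_score(point, centroids):
    """Distance to the nearest learned centroid: larger means less typical."""
    return min(math.dist(point, c) for c in centroids)
```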

"Behavioral analytics in cybersecurity uses machine learning (ML) and artificial intelligence (AI) to analyze patterns in user and entity behavior within networks, applications, and other digital environments." – Security Specialist

The need for this capability is clear: around 88% of IT professionals admit they lack the behavioral analytics necessary to detect emerging threats. However, organizations that integrate behavioral analytics and threat intelligence report a significant advantage, reducing intrusion detection time by an average of 28 days. Once trained, these models enable rapid and accurate anomaly scoring.

Anomaly Detection for Early Threat Identification

After establishing baselines, each event is assigned a risk score based on how far it deviates from expected patterns. For example, a user accessing files from an unusual location at 3:00 AM might earn a moderate risk score. If this activity is combined with large data transfers to an external cloud service, the score escalates significantly. Modern systems categorize anomalies into three types: point anomalies (isolated suspicious events), contextual anomalies (normal actions occurring in unusual contexts), and collective anomalies (sequences of events that seem benign individually but indicate malicious intent when viewed together).
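The escalation described above can be sketched as additive weights plus a bonus when signals co-occur. This is a crude stand-in for collective-anomaly logic. The signal names, weights, and severity cut-offs are illustrative assumptions, not values from any product.

```python
from dataclasses import dataclass, field

# Illustrative per-signal weights; real systems learn or tune these.
WEIGHTS = {
    "unusual_location": 25,
    "off_hours": 15,
    "large_external_transfer": 40,
}
# Signals that are far more suspicious together than apart.
COMBO_BONUS = {
    frozenset({"unusual_location", "off_hours", "large_external_transfer"}): 20,
}


@dataclass
class Activity:
    user: str
    signals: set = field(default_factory=set)


def risk_score(activity: Activity) -> int:
    score = sum(WEIGHTS.get(s, 0) for s in activity.signals)
    for combo, bonus in COMBO_BONUS.items():
        if combo <= activity.signals:  # every signal in the combo is present
            score += bonus
    return min(score, 100)


def severity(score: int) -> str:
    return "high" if score >= 70 else "medium" if score >= 30 else "low"
```

With these weights, a 3:00 AM access from an unusual location scores 40 (medium). Adding a large external transfer pushes it to 100 (high).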

Security teams are inundated with an average of 4,484 alerts daily, with about 67% going uninvestigated due to sheer volume. Behavioral baselining addresses this issue by highlighting high-priority alerts that truly warrant human attention, turning alert overload into actionable intelligence for focused threat hunting.

Unsupervised Learning for Detecting Unknown Threats

Unsupervised learning builds on behavioral baselines to tackle threats that don’t match known signatures. While traditional tools depend on identifying attack patterns already in their database, unsupervised methods excel at detecting new techniques and insider threats. Unlike supervised models that need labeled training data, unsupervised algorithms analyze unlabeled data, uncovering patterns and spotting deviations from normal activity. This makes them especially useful for identifying insider attacks, lateral movement, and zero-day exploits – threats that don’t leave a recognizable trail. By identifying these anomalies, unsupervised learning works alongside behavioral analytics to form a stronger, more complete threat detection framework.

This approach is invaluable for organizations managing over 5,000 events per second, where manual processes simply can’t keep up. Supervised systems may require 6 to 24 months of labeled training data. Unsupervised learning, by contrast, can often establish an initial baseline within a few days and then refine it as more activity is observed. This speed is critical, especially when traditional tools might misclassify unfamiliar but harmful activities as harmless.

Identifying Patterns Without Labeled Data

Unsupervised models work by analyzing the structure of data to detect anomalies. Clustering algorithms such as k-means and DBSCAN group similar behaviors so that outliers stand out. Isolation forests work differently: they flag points that random splits separate from the rest of the data unusually quickly. For instance, suppose database administrators typically access customer records during business hours from the corporate network. An unsupervised model would flag a DBA accessing the same records at 2:00 AM from a remote coffee shop.
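Isolation forests are easy to mistake for a clustering method, so a from-scratch sketch helps. Each tree splits the data on a random feature at a random value. Points that get isolated after only a few splits receive scores near 1. This follows the standard isolation forest recipe but is only a teaching sketch. The feature pair (for example, login hour and GB transferred) is an assumption, and production code would typically use a maintained implementation such as scikit-learn's `IsolationForest`.

```python
import math
import random

EULER_GAMMA = 0.5772156649


def _c(n):
    """Average unsuccessful-search path length in a BST of n points (depth normalizer)."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    return 2.0 * (math.log(n - 1) + EULER_GAMMA) - 2.0 * (n - 1) / n


def _build(points, depth, max_depth, rng):
    # Stop when a point is isolated, the depth limit is hit, or all values tie.
    if depth >= max_depth or len(points) <= 1:
        return ("leaf", len(points))
    dim = rng.randrange(len(points[0]))
    lo = min(p[dim] for p in points)
    hi = max(p[dim] for p in points)
    if lo == hi:
        return ("leaf", len(points))
    split = rng.uniform(lo, hi)
    left = [p for p in points if p[dim] < split]
    right = [p for p in points if p[dim] >= split]
    return ("node", dim, split,
            _build(left, depth + 1, max_depth, rng),
            _build(right, depth + 1, max_depth, rng))


def _path_length(x, node, depth=0):
    if node[0] == "leaf":
        # Unresolved leaves get the expected extra depth for their size.
        return depth + _c(node[1])
    _, dim, split, left, right = node
    return _path_length(x, left if x[dim] < split else right, depth + 1)


class IsolationForest:
    def __init__(self, n_trees=100, sample_size=64, seed=0):
        self.n_trees = n_trees
        self.sample_size = sample_size
        self.rng = random.Random(seed)

    def fit(self, points):
        # Needs at least two points so the normalizer c(n) is non-zero.
        self.n = min(self.sample_size, len(points))
        max_depth = math.ceil(math.log2(self.n))
        self.trees = [_build(self.rng.sample(points, self.n), 0, max_depth, self.rng)
                      for _ in range(self.n_trees)]
        return self

    def score(self, x):
        """Score in (0, 1]: near 1 means easily isolated; well below 0.5 means typical."""
        mean_depth = sum(_path_length(x, t) for t in self.trees) / len(self.trees)
        return 2.0 ** (-mean_depth / _c(self.n))
```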

These models assign risk scores to deviations using techniques such as distance-based scoring or isolation-forest path lengths. In more complex scenarios, Long Short-Term Memory (LSTM) neural networks can analyze sequential data to identify sophisticated attack patterns, such as lateral movement. This matters because insider incidents, which rarely match known signatures, rose by 47% between 2018 and 2022.

"Unsupervised machine learning uses the very nature of the environment within which it is deployed to create the baseline upon which decisions are made." – Russell Gray, Vice President of Product Development, MixMode

However, unsupervised models often generate more false positives than rule-based systems. To address this, flagged events are enriched with threat intelligence and asset data, helping to distinguish true threats from benign anomalies. A human-in-the-loop approach ensures critical findings are reviewed by experts, with feedback helping the AI refine its baseline over time.
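Here is a sketch of that enrichment and feedback loop. It assumes a hypothetical threat-intel set (`KNOWN_BAD_IPS`) and a hypothetical asset-criticality map (`ASSET_CRITICALITY`). As a simplification, analyst feedback here only suppresses (user, host) pairs marked benign. A real system would also feed those labels back into retraining.

```python
from dataclasses import dataclass

# Hypothetical enrichment sources; in practice a TI feed and an asset inventory.
KNOWN_BAD_IPS = {"198.51.100.23"}
ASSET_CRITICALITY = {"db-prod-01": 3, "laptop-042": 1}


@dataclass
class Finding:
    user: str
    host: str
    remote_ip: str
    anomaly_score: float  # 0..1 from the unsupervised model


class Triage:
    def __init__(self):
        # (user, host) pairs an analyst has marked as benign.
        self.benign = set()

    def record_feedback(self, finding, is_benign):
        key = (finding.user, finding.host)
        if is_benign:
            self.benign.add(key)
        else:
            self.benign.discard(key)

    def priority(self, finding):
        if (finding.user, finding.host) in self.benign:
            return 0.0  # suppressed by earlier analyst feedback
        # Anomalies on critical assets rank higher; unknown hosts default to 1.
        score = finding.anomaly_score * ASSET_CRITICALITY.get(finding.host, 1)
        if finding.remote_ip in KNOWN_BAD_IPS:
            score *= 2  # a threat-intel match raises confidence
        return score
```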

Real-Time Monitoring of Traffic and System Behavior

Unsupervised learning doesn’t stop at pattern detection – it also powers real-time monitoring. Logs from firewalls, cloud APIs (like AWS CloudTrail), endpoint agents, and SIEM platforms are ingested and normalized into a common format, such as CEF or syslog. This unified data allows the system to evaluate each event against the established baseline in real time.

Organizations are increasingly adopting inline NDR (Network Detection and Response) sensors that integrate directly into traffic flows. These sensors can decrypt, analyze, and respond to threats immediately, rather than merely observing them. This is particularly useful for tracking unmanaged devices, which often outnumber managed ones by a ratio of 2 to 1. As Joe Lee from Trend Micro explains:

"NDR addresses these struggles by monitoring your network traffic and device behaviors. Any activity around an unmanaged device can be detected, analyzed, and determined to be anomalous, even if the device itself is dark."

For example, an unsupervised model can identify an attacker using legitimate tools in an unusual way, such as moving laterally across the network with valid credentials to access previously untouched systems. The AI doesn’t need a predefined signature for such attacks; it simply recognizes when actions deviate from the norm. In high-volume environments, this capability reduces alert fatigue by narrowing the focus to the anomalies that matter most, enabling security teams to prioritize real threats effectively.
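The valid-credentials case can be approximated with a first-seen check. Once an account has enough history, a login to a host it has never touched is surfaced for review. `FirstSeenAccess` and its `min_history` threshold are hypothetical. Real detectors would also weigh host sensitivity and how many new hosts appear in a short span.

```python
from collections import defaultdict


class FirstSeenAccess:
    """Flags a valid login to a host the account has never accessed before."""

    def __init__(self, min_history=20):
        # Only judge accounts with enough history (illustrative threshold).
        self.min_history = min_history
        self.seen = defaultdict(set)
        self.events = defaultdict(int)

    def observe(self, user, host):
        """Record an access; return True if it looks like possible lateral movement."""
        novel = self.events[user] >= self.min_history and host not in self.seen[user]
        self.seen[user].add(host)
        self.events[user] += 1
        return novel
```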

Natural Language Processing for Threat Intelligence

AI isn’t just about spotting unusual system behavior – it goes further by using Natural Language Processing (NLP) to turn unstructured threat data into actionable insights. While unsupervised learning shines in identifying anomalies, NLP focuses on extracting meaningful intelligence from unstructured sources like threat reports, red team writeups, logs, and open-source intelligence (OSINT). This combination strengthens the ability to detect patterns across diverse datasets.

NLP-powered platforms take raw, unstructured text and convert it into structured, machine-readable formats that security teams can act on swiftly. These systems rely on specialized Large Language Models (LLMs) to pinpoint adversary behaviors, pull out metadata like cloud infrastructure details and detection opportunities, and align findings with frameworks like MITRE ATT&CK. The process typically involves several steps: breaking documents into manageable segments, extracting Tactics, Techniques, and Procedures (TTPs) from text, normalizing behaviors using Retrieval Augmented Generation (RAG), and performing gap analysis through vector similarity searches. As the Microsoft Defender Security Research Team puts it:

"Security teams routinely need to transform unstructured threat knowledge, such as incident narratives, red team breach-path writeups, threat actor profiles, and public reports into concrete defensive action."

NLP isn’t limited to internal data – it also enhances OSINT analysis. By processing massive volumes of open-source data, NLP can uncover patterns that might escape human detection, such as unusual login attempts or phishing strategies hidden in communication data. Despite these benefits, 38% of enterprise leaders currently lack trust in AI vendor security, even though 81% feel competitive pressure to adopt AI tools.

Parsing Unstructured Data for Insights

The first step in NLP workflows is segmenting documents into machine-readable chunks – like text blocks, headings, lists, or code snippets – while keeping their original context intact. This segmentation allows LLMs to focus on specific sections and identify behaviors that align with known techniques, converting them into structured formats for further analysis.
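A minimal chunker along those lines might look like the following. It assumes reports arrive as plain text with Markdown-style `#` headings. It splits on blank lines and tags each chunk with the nearest preceding heading, so the LLM sees section context. The character cap is a simplification; real pipelines usually budget by tokens.

```python
import re


def chunk_document(text, max_chars=800):
    """Split a report into blocks, tagging each chunk with its nearest heading."""
    chunks, heading = [], ""
    # Blank lines separate blocks such as paragraphs, lists, or code snippets.
    for block in re.split(r"\n\s*\n", text.strip()):
        block = block.strip()
        if not block:
            continue
        lines = block.splitlines()
        if lines[0].startswith("#"):
            # Remember the heading so later chunks keep their section context.
            heading = lines[0].lstrip("#").strip()
            block = "\n".join(lines[1:]).strip()
            if not block:
                continue
        # Oversized blocks are cut every max_chars characters.
        for start in range(0, len(block), max_chars):
            chunks.append({"heading": heading, "text": block[start:start + max_chars]})
    return chunks
```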

NLP goes beyond basic keyword detection. Using RAG, these systems extract detailed metadata and map behaviors to the MITRE ATT&CK framework, assigning precise technique identifiers.
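Before any RAG step, a deterministic pre-pass can pick up technique IDs that a report already cites explicitly. The sketch below matches the ATT&CK ID shape: `T1059`, or `T1059.001` for a sub-technique. It is not the RAG mapping itself. That mapping is what handles prose descriptions that name no ID.

```python
import re

# ATT&CK technique IDs look like T1059, or T1059.001 for a sub-technique.
# Tactic IDs such as TA0002 do not match because "A" is not a digit.
TECHNIQUE_ID = re.compile(r"\bT\d{4}(?:\.\d{3})?\b")


def extract_technique_ids(text):
    """Return explicitly cited technique IDs in first-seen order, without duplicates."""
    return list(dict.fromkeys(m.group() for m in TECHNIQUE_ID.finditer(text)))


def parent_technique(technique_id):
    """Map a sub-technique such as T1059.001 to its parent T1059."""
    return technique_id.split(".")[0]
```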

The final step, gap analysis, ensures comprehensive threat coverage. By comparing extracted data against existing detection catalogs, NLP systems use vector similarity searches and LLM validation to identify areas that are well-covered versus those that need attention. This involves standardizing metadata and code into relational databases and applying algorithms to generate confidence scores for threat matching.

To ensure accuracy, security teams should include human oversight during the final review of TTP lists and coverage conclusions. This step helps mitigate the risk of missing critical details in lengthy documents. Jack Pittas, Co-founder and President of PK Cyber Solutions Inc., highlights the value of AI in such scenarios:

"AI can help triage alerts, prioritize incidents, and predict likely attack paths, particularly in environments where the volume of signals is overwhelming."

By bridging behavioral analytics with textual intelligence, NLP enhances the overall effectiveness of threat detection.

Using The Security Bulldog for OSINT

The Security Bulldog is a platform that applies NLP specifically to OSINT. Its proprietary engine processes cyber intelligence from sources like MITRE ATT&CK, CVE databases, security podcasts, and news feeds. Future integrations aim to include data from STIG, Twitter, the Dark Web, and SBOM repositories.

The platform’s semantic analysis allows security teams to investigate incidents using natural language queries instead of complicated search commands. By analyzing vast amounts of threat data in real time, The Security Bulldog identifies emerging zero-day vulnerabilities faster than manual methods. It operates 24/7, providing enriched insights from multiple OSINT sources to help teams respond more effectively.

Customizable feeds let teams focus on threats relevant to their specific IT environments. Additionally, seamless integration with existing SOAR and SIEM tools ensures that NLP-derived insights flow directly into current workflows, speeding up detection and response without requiring teams to overhaul their systems.

Predictive Analytics and Deep Learning for Trend Forecasting

Building on anomaly detection and insights from NLP, predictive analytics takes security a step further by forecasting potential attack trends. NLP focuses on extracting intelligence from text. Predictive analytics and deep learning, by contrast, analyze datasets such as traffic logs, endpoint events, and Indicators of Compromise (IoCs) to uncover patterns that traditional systems might miss. Rather than waiting for known threats to appear, predictive AI lets teams shift from reactive cleanup to proactive anticipation.

Deep learning models are particularly effective for analyzing sequences where timing and order play a critical role. This makes them well-suited for identifying multi-stage attacks – like those involving reconnaissance, lateral movement, and data exfiltration – that unfold over weeks or months. Using time-series forecasting, these models detect subtle changes and anomaly clusters that often precede an incident. As Cyble Editorial highlights:

"Predictive threat intelligence moves teams from reaction to anticipation."

By spotting patterns during the early reconnaissance phase, predictive models enable organizations to neutralize threats before they escalate. For example, a major financial institution reduced fraudulent activities by 40% within six months by leveraging predictive analytics to analyze transaction patterns. Similarly, e-commerce platforms have successfully blocked over 90% of malicious bot traffic using these tools.

Building Predictive Models for Threat Trends

The first step in creating predictive models is data ingestion and preprocessing. Security teams collect data from sources like firewall logs, endpoint events, and external cyber threat intelligence (CTI) feeds. Through feature engineering, they identify key indicators – such as login frequency, device location, and IP reputation – and establish behavioral baselines for time-series forecasting.

Once baselines are in place, time-series forecasting helps identify anomaly clusters that signal potential attack chains across different environments. This approach is especially important given the "280-day breach clock", during which attackers often remain undetected while conducting reconnaissance and staging data.
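Deep sequence models are beyond a short example. The core idea of flagging deviations from a forecast, though, can be sketched with a one-step-ahead exponentially weighted moving average (EWMA). This is a deliberately simple stand-in for the time-series models described here. The `alpha`, `k`, and `warmup` values are assumed tuning parameters.

```python
def ewma_anomalies(series, alpha=0.3, k=3.0, warmup=5):
    """Return indices whose value deviates from the EWMA forecast by more than k std devs.

    The forecast for step i is the EWMA of steps 0..i-1. A running exponentially
    weighted variance of the forecast error supplies the tolerance band.
    """
    if not series:
        return []
    flagged = []
    mean, var = series[0], 0.0
    for i, x in enumerate(series[1:], start=1):
        error = x - mean
        if i > warmup and var > 0 and abs(error) > k * var ** 0.5:
            flagged.append(i)
        # Anomalous points still update the state, which widens the band afterwards.
        mean += alpha * error
        var = (1 - alpha) * (var + alpha * error * error)
    return flagged
```

For a daily count of failed logins such as `[20, 22, 19, 21, 20, 23, 21, 20, 22, 95, 21]`, only the spike at index 9 falls outside the band.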

Predictive models go beyond flagging anomalies – they correlate historical data with global threat trends to predict likely attack paths, including ransomware or Advanced Persistent Threat (APT) campaigns. As Palo Alto Networks explains:

"AI helps with zero-day attacks by using anomaly detection and behavioral analytics. Since zero-day attacks are previously unknown, signature-based systems cannot detect them."

To ensure these models are accurate, organizations must prioritize data quality by using diverse, clean, and well-balanced datasets. Incorporating Explainable AI (XAI) techniques, like SHAP (Shapley Additive Explanations), also helps analysts understand which factors influenced a predictive alert, making it easier to validate results.
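For a linear risk model with independent features, SHAP values have a closed form: phi_i = w_i * (x_i - E[x_i]). That makes the idea easy to see without the `shap` library. The feature names and weights used with these functions are hypothetical.

```python
def linear_score(weights, bias, x):
    """Risk score from a linear model: bias plus the sum of weight * feature value."""
    return bias + sum(w * x[name] for name, w in weights.items())


def linear_shap(weights, feature_means, x):
    """Per-feature SHAP values for a linear model with independent features.

    In this special case phi_i = w_i * (x_i - E[x_i]), and the values sum to
    f(x) - f(E[x]): how far this alert's score sits above the average score.
    """
    return {name: w * (x[name] - feature_means[name]) for name, w in weights.items()}
```

With weights `{"failed_logins": 0.5, "new_country": 2.0}`, an alert with 10 failed logins against an average of 2 gets a `failed_logins` contribution of 4.0. An analyst can read this directly as the main reason the alert fired.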

Continuous Learning for Improved Detection

To stay ahead of evolving threats like adaptive malware and AI-driven phishing, deep learning models must continuously improve. Continuous learning addresses "model drift", which occurs when real-world data – such as new malware variants – diverges from the model’s original training data, reducing its accuracy.
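One common way to quantify that divergence is the Population Stability Index (PSI). It compares a feature's training-time distribution with a recent sample. The text does not name a drift metric, so PSI is a swapped-in example. The often-quoted reading of PSI (below 0.1 as stable, above 0.25 as significant drift) is an industry rule of thumb, not a standard.

```python
import math


def psi(expected, actual, bins=10, eps=1e-4):
    """Population Stability Index between a training-time sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values are equal

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Bins come from the training sample; out-of-range values go to the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps avoids log(0) when a bin is empty.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```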

Feedback loops play a critical role in this process. When human analysts review false positives or missed threats, the system uses this feedback to retrain and enhance its future performance. Modern AI platforms are designed to process millions of events per second, identifying correlations that would be impossible for humans to catch manually.

"The volume and velocity of modern threats exceed human processing capacity. That doesn’t diminish human expertise. It makes it more valuable – and more strategic."

Organizations that adopt AI-driven solutions often see a return on investment within 4 to 12 months, with break-even volumes typically around 50,000 interactions annually. By enabling teams to anticipate future threats, predictive analytics completes the cycle of AI-driven security, moving from detection to proactive defense.

Data Correlation and Automated Responses with AI

AI takes threat detection to the next level by piecing together clues from various data sources. Cyberattacks often involve multiple, seemingly unrelated events, and AI shines at connecting these dots. It collects and analyzes telemetry from firewalls, endpoints, network traffic, cloud platforms, and external threat feeds to uncover the bigger picture of an attack. For instance, a login from an unfamiliar device might raise no alarms on its own. But when AI links it to a late-night file transfer, it flags the activity as a high-priority threat.
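The login-plus-transfer example can be sketched as a time-windowed join on user. The event dictionary fields (`type`, `user`, `time`, `new_device`), the two-hour window, and the late-night cutoff are all assumptions for illustration.

```python
from datetime import datetime, timedelta


def is_late_night(ts: datetime) -> bool:
    # 00:00-04:59; the cutoff is an illustrative assumption.
    return ts.hour < 5


def correlate(events, window=timedelta(hours=2)):
    """Pair unfamiliar-device logins with late-night file transfers by the same
    user within `window`, raising each pair as a high-severity incident."""
    logins = [e for e in events if e["type"] == "login" and e.get("new_device")]
    transfers = [e for e in events if e["type"] == "file_transfer"]
    incidents = []
    for login in logins:
        for xfer in transfers:
            gap = xfer["time"] - login["time"]
            if (xfer["user"] == login["user"] and is_late_night(xfer["time"])
                    and timedelta(0) <= gap <= window):
                incidents.append({"user": login["user"], "severity": "high",
                                  "evidence": [login, xfer]})
    return incidents
```

Neither event alone produces an incident. Only the combination of the same user, the right order, and a short gap does.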

This ability to provide a comprehensive view of potential threats is what security experts call attack surface visibility. It allows teams to see adversary behavior across all tools and techniques. AI proves invaluable here, given that Security Operations Centers (SOCs) handle an average of 4,484 alerts daily and analysts spend up to three hours a day sorting through them. With a 99% accuracy rate in incident formation, AI-powered tools save an estimated 7.2 million analyst hours annually, translating to approximately $241 million in savings. Pushpendra Mishra from Seceon highlights this efficiency:

"Automated incident response has emerged as a game-changer, enabling organizations to quickly contain, mitigate, and remediate cyber threats with minimal human intervention."

Correlating Multi-Source Data for Attack Detection

AI works by creating behavioral baselines and identifying deviations that suggest malicious activity. This is especially effective against Indicators of Attack (IOAs), which focus on the intent and sequence of actions rather than static markers like IP addresses or file hashes.

However, false correlations can disrupt operations if not managed carefully. To address this, Microsoft’s security team recommends three key practices: keeping detectors below noise thresholds, avoiding correlations based on overly generic data, and limiting the number of entities linked to individual alerts. As Scott Freitas from Microsoft warns:

"False correlations pose a significant risk and can lead to unwarranted actions on benign devices or users, disrupting vital company operations."

Organizations that heavily integrate AI into their security systems report an average savings of $1.9 million compared to those that don’t. They also achieve a 98% detection rate and reduce incident response times by 70% in high-risk situations. This reliable data correlation sets the stage for automated response systems.

Automating Responses with AI-Driven Platforms

Once AI detects a coordinated attack, it can integrate with SOAR (Security Orchestration, Automation, and Response) tools to take immediate action. These tools can isolate compromised endpoints, block malicious IPs, or disable accounts within seconds. This speed is critical, as manual efforts often take hours or even days, giving attackers a dangerous window to escalate their activities.

Platforms like The Security Bulldog use proprietary NLP engines to feed structured threat intelligence into SOAR systems. By distilling open-source cyber intelligence and syncing with existing tools, it empowers teams to automate containment measures while keeping human analysts involved for complex decisions.

Ninety-five percent of users report that AI-powered cybersecurity improves both detection and response speeds. To get the most out of these systems, security teams should start small – automating responses to low-risk, high-volume alerts – before moving on to more critical, autonomous actions. Establishing feedback loops where analysts refine AI models over time ensures that the system evolves, becoming better at distinguishing between legitimate changes and actual threats.
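Here is a sketch of the start-small policy. It maps each alert type to a containment action and auto-executes only allow-listed actions above a confidence bar. Everything else goes to an analyst. The playbook and action names are hypothetical, and the function returns a decision rather than calling any real SOAR API.

```python
from dataclasses import dataclass

# Containment action per alert type (hypothetical playbook names).
PLAYBOOK = {
    "malicious_ip": "block_ip",
    "compromised_endpoint": "isolate_endpoint",
    "compromised_account": "disable_account",
}
# Start small: only low-impact, high-volume actions run without a human.
AUTO_APPROVED = {"block_ip"}


@dataclass
class Alert:
    alert_type: str
    target: str
    confidence: float


def dispatch(alert, min_confidence=0.9):
    """Return ("auto", action), ("needs_approval", action), or ("manual", None)."""
    action = PLAYBOOK.get(alert.alert_type)
    if action is None:
        return ("manual", None)  # no playbook entry: an analyst handles it
    if action in AUTO_APPROVED and alert.confidence >= min_confidence:
        return ("auto", action)
    return ("needs_approval", action)
```

Widening `AUTO_APPROVED` over time, as analyst feedback builds trust in each action, mirrors the gradual path from low-risk automation toward more autonomous responses.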

Conclusion

AI is reshaping how we approach threat detection and response. By combining machine learning, natural language processing (NLP), and predictive analytics, security teams can identify potential threats earlier and more effectively. Predictive analytics, in particular, helps shift the focus from reacting to incidents after they occur to proactively identifying threats during the reconnaissance stage. As Cyble Editorial puts it:

"Prediction isn’t just faster detection – it’s a different mindset."

AI-driven platforms have the ability to process and correlate millions of events per second across endpoints, cloud environments, and network traffic. This capability is essential as cybercriminals increasingly leverage generative AI for automated reconnaissance and adaptive malware development. The collaborative "Agentic SOC" model exemplifies this future, where AI handles massive data correlation while human analysts contribute strategic insights. Such integration ensures that these advanced technologies fit seamlessly into existing security workflows.

Platforms like The Security Bulldog simplify the extraction of actionable intelligence, allowing teams to move away from time-consuming log analysis and focus instead on strategic threat hunting.

However, automation alone isn’t enough. Effective threat detection still requires human oversight. While AI can provide initial insights, expert analysts play a critical role in validating and refining these outputs. Incorporating human-in-the-loop validation ensures that AI systems remain accurate and adaptable. Regular retraining with updated threat data is also vital to keep AI tools aligned with the ever-changing tactics of attackers.

As regulatory demands grow and adversaries become more sophisticated, adopting AI-powered tools is no longer optional. Integrating AI into cybersecurity is an essential step forward, not just an incremental improvement.

FAQs

How much data does AI need to learn “normal” behavior?

AI systems typically need to observe routine activity over a training period, often 21 to 90 days, to learn what qualifies as "normal" behavior. Data variety matters as much as volume. Datasets that capture a wide range of user actions and network patterns help the model pinpoint trends and detect anomalies with greater precision.

How can teams reduce false positives from anomaly detection?

Teams can cut down on false positives by leveraging AI-driven models that continuously learn and adjust to evolving environments. Unlike rigid, rule-based systems, AI examines behavioral patterns over time, helping to distinguish harmless anomalies from real threats. By integrating threat intelligence and contextual insights, AI can prioritize alerts based on risk levels, automate data correlation, and boost detection precision. This allows cybersecurity teams to concentrate on actual threats, reducing alert fatigue and making their operations more efficient.

What’s the safest way to automate AI-driven incident response?

The safest way to automate AI-driven incident response is to use dynamic, context-aware systems that can adjust to changing threats. These systems help cut down on alert fatigue, focus on genuine risks, and handle routine tasks automatically, which reduces the likelihood of human mistakes. Start by automating low-risk, high-volume actions before moving to more critical ones. To keep things secure, make sure AI systems are transparent and closely monitored, and keep human oversight for critical decisions. This balances speed and reliability while maintaining control.