Python Libraries for Web Scraping in OSINT

Web scraping is a key part of OSINT (Open Source Intelligence) workflows, especially when APIs or datasets aren’t available. Python’s simplicity and wide range of libraries make it a top choice for gathering data from websites, social media, and databases. Here’s a quick guide to the most useful Python libraries for web scraping in OSINT:

- BeautifulSoup: Extracts specific elements from HTML or XML, ideal for precise tasks like pulling metadata or contact details.

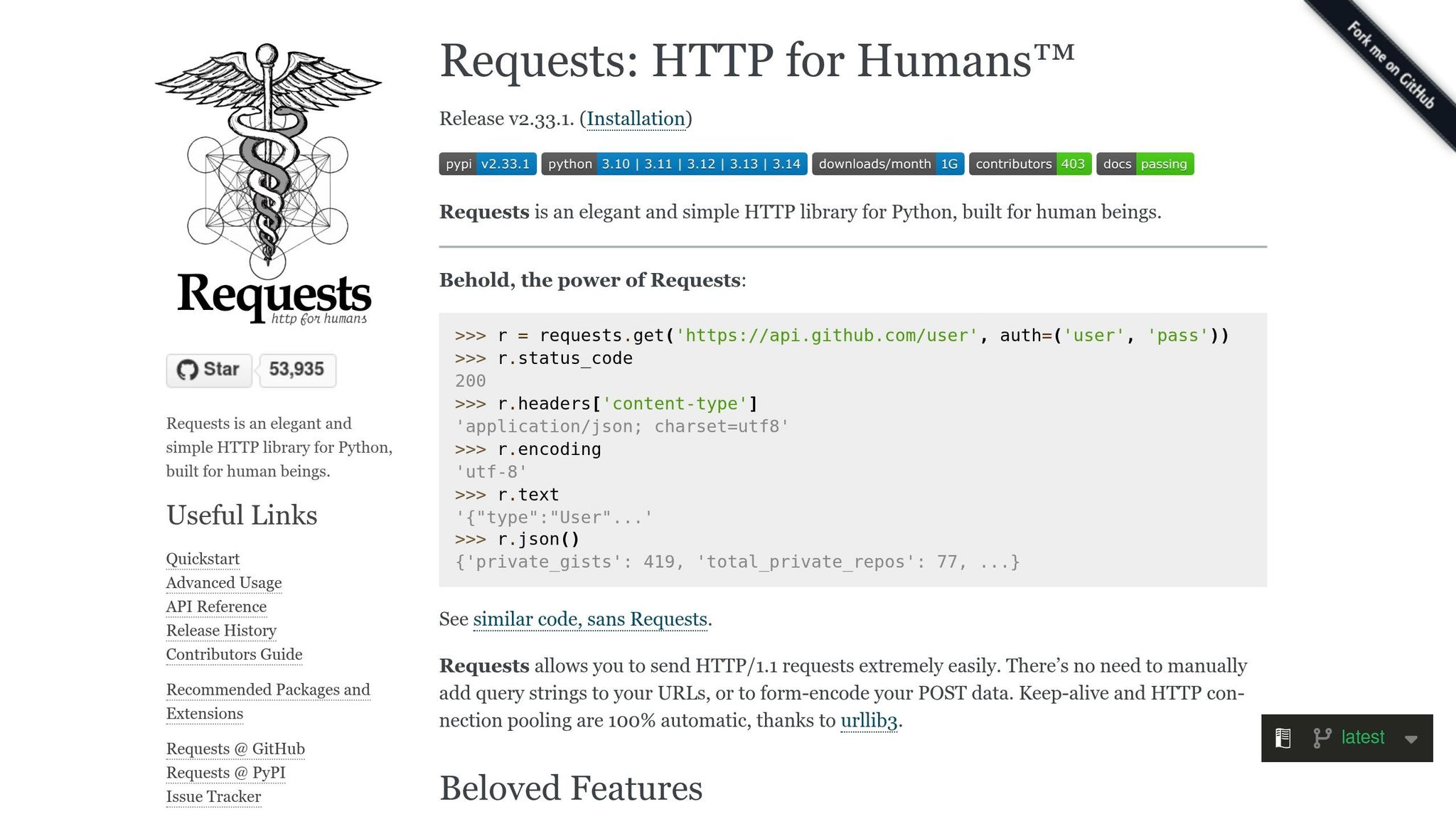

- Requests: Fetches web content and handles HTTP requests, cookies, and authentication for APIs.

- Scrapy: A complete web crawling framework for large-scale data collection, managing concurrent requests efficiently.

- Snscrape: Scrapes social media platforms like Twitter and Reddit without needing API keys.

- SpiderFoot: Automates OSINT tasks with over 200 modules for scanning DNS records, IPs, and domains.

- Shodan/Censys: Maps internet infrastructure using pre-indexed data from scanning databases.

- Stem: Controls a local Tor process from Python, supporting anonymous access to dark web resources when traffic is routed through the Tor network.

These tools allow OSINT professionals to extract data efficiently, even from complex or JavaScript-heavy websites. Ethical considerations, like respecting rate limits and legal guidelines, are essential when using these libraries. Combining multiple tools can further enhance workflows, enabling tasks like bypassing anti-bot measures or handling CAPTCHAs. Python’s ecosystem continues to be a vital resource for OSINT investigations.



Python Libraries for Web Scraping in OSINT

The Python ecosystem is packed with tools tailored for OSINT (Open Source Intelligence) practitioners. From simple parsers to comprehensive automation frameworks, these libraries can handle everything from extracting structured data on static pages to navigating complex, JavaScript-heavy websites. Choosing the right tool can save time while ensuring accurate, high-quality data collection. Here’s a breakdown of some key libraries and their roles in OSINT workflows.

BeautifulSoup

BeautifulSoup is a go-to library for extracting specific elements from HTML and XML. Whether you’re pulling contact details from directories or metadata from forum posts, it simplifies the process by converting web content into a navigable tree structure. It supports three parsing options:

- html.parser: Built into Python, it’s fast and doesn’t require extra dependencies.

- lxml: The fastest option but relies on an external C library.

- html5lib: Mimics browser behavior for accurate parsing but is slower.

BeautifulSoup’s strength lies in its simplicity. You can target elements using tags, CSS selectors, or custom attributes, making it ideal for tasks that require precision without complexity.
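As a rough illustration of that targeting, the sketch below parses a small inline HTML sample with the built-in html.parser and pulls contact details and a metadata tag. The markup, class names, and the helper names `extract_contacts` and `extract_meta` are made up for this example; a real page will need its own selectors.

```python
from bs4 import BeautifulSoup

# Hypothetical directory page; a real workflow would fetch this HTML first.
HTML = """
<html>
  <head>
    <title>Staff Directory</title>
    <meta name="author" content="Example Corp">
  </head>
  <body>
    <div class="contact" data-role="admin">
      <span class="name">Jane Doe</span>
      <a href="mailto:jane@example.com">Email</a>
    </div>
    <div class="contact" data-role="press">
      <span class="name">John Roe</span>
      <a href="mailto:john@example.com">Email</a>
    </div>
  </body>
</html>
"""


def extract_contacts(html):
    """Return one dict per contact card, using CSS selectors and attributes."""
    soup = BeautifulSoup(html, "html.parser")
    contacts = []
    for card in soup.select("div.contact"):
        name = card.select_one("span.name").get_text(strip=True)
        # Attribute selector: only anchors whose href starts with "mailto:".
        link = card.select_one('a[href^="mailto:"]')
        email = link["href"][len("mailto:"):] if link else None
        contacts.append({"name": name, "email": email, "role": card.get("data-role")})
    return contacts


def extract_meta(html, name):
    """Return the content of <meta name="..."> or None if it is absent."""
    soup = BeautifulSoup(html, "html.parser")
    tag = soup.find("meta", attrs={"name": name})
    return tag["content"] if tag else None


if __name__ == "__main__":
    print(extract_contacts(HTML))
    print(extract_meta(HTML, "author"))
```

Swapping `"html.parser"` for `"lxml"` or `"html5lib"` changes only the parser argument, provided the corresponding package is installed.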

Requests

Requests handles the foundational task of fetching web content. Whether you’re retrieving raw HTML, managing cookies, or handling authentication for APIs, it’s an essential tool for the initial stages of OSINT workflows. Its clean syntax makes it easy to use for tasks like submitting forms or testing endpoints, making it a favorite for quick and efficient data retrieval.
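A minimal sketch of that fetching step might look like the following. The bot name, contact address, and the helpers `build_session`, `preview_request`, and `fetch` are assumptions for illustration; `preview_request` prepares a request without sending it, which is a convenient way to check the final URL and headers before touching a target.

```python
import requests


def build_session(contact_email):
    """Create a session whose headers and cookies persist across requests."""
    session = requests.Session()
    # Hypothetical bot name; the contact address lets site admins reach you.
    session.headers.update(
        {"User-Agent": f"osint-research-bot/0.1 (+mailto:{contact_email})"}
    )
    return session


def preview_request(session, url, params=None):
    """Prepare (but do not send) a GET request to inspect what would go out."""
    request = requests.Request("GET", url, params=params)
    return session.prepare_request(request)


def fetch(session, url, params=None, timeout=10):
    """Send a GET request and return the body, raising on HTTP error codes."""
    response = session.get(url, params=params, timeout=timeout)
    response.raise_for_status()
    return response.text


if __name__ == "__main__":
    session = build_session("analyst@example.org")
    prepared = preview_request(session, "https://example.com/search", {"q": "osint"})
    print(prepared.method, prepared.url)
    print(prepared.headers["User-Agent"])
```

Passing an explicit `timeout` matters in practice, since Requests does not apply one by default.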

Scrapy

Scrapy is more than just a library – it’s a complete web crawling framework. With over 55,100 stars on GitHub, it’s designed for large-scale projects, managing tasks like concurrent requests and crawl queues effortlessly. It even allows you to pause and resume jobs, which is invaluable for complex investigations.

An interactive shell lets you test scraping logic in real time, streamlining the development process. As Pierluigi Vinciguerra, Co-Founder and CTO at Databoutique.com, puts it:

"Scrapy is the cornerstone of web scraping with Python. Without it, scraping would be much harder."

For OSINT pros dealing with thousands of domains, Scrapy is a must-have.

Snscrape

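Social media platforms often restrict API access, but Snscrape sidesteps these limitations. It allows you to scrape data from platforms like Twitter (X), Reddit, Instagram, and Telegram without needing API keys. This makes it a powerful tool for gathering posts, profiles, and engagement metrics while avoiding authentication hurdles. Because it depends on each platform’s public web endpoints, individual scrapers can stop working when a platform changes its site or tightens access, so confirm that the module you need still works before building a workflow around it.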

For tasks like monitoring disinformation campaigns or tracking threat actors across multiple platforms, Snscrape provides a consistent, API-free solution.

SpiderFoot

SpiderFoot is an automation powerhouse, offering over 200 modules to scan DNS records, IP addresses, domains, and even dark web sources. It excels at correlating data from multiple databases, making it particularly effective for mapping digital footprints or uncovering infrastructure relationships.

What sets SpiderFoot apart is its support for passive intelligence gathering. When a scan is limited to passive modules, it avoids direct contact with target servers, which minimizes the risk of detection during investigations.

Shodan and Censys Python Libraries

For infrastructure mapping, Shodan and Censys are invaluable. These libraries provide programmatic access to extensive internet-wide scanning databases, offering insights into open ports, device banners, and SSL certificates.

Unlike traditional scrapers, these tools rely on pre-indexed data, which means you’re not directly interacting with servers. Both offer free tiers, but advanced features require paid API keys. They’re particularly useful for uncovering IoT device configurations or ASN details that standard web scrapers can’t easily identify.
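The sketch below shows one way a Shodan host lookup could be condensed into a list of open services. `shodan.Shodan(api_key).host(ip)` is the client call from the official shodan package; the record layout used by `summarize_host` (the `ip_str`, `org`, and `data` keys, with `port`, `transport`, and `product` on each banner) reflects the commonly returned fields and should be treated as an assumption to verify against a real response. The sample record is invented.

```python
def summarize_host(host):
    """Condense a Shodan-style host record into a sorted list of services."""
    services = []
    for banner in host.get("data", []):
        services.append(
            {
                "port": banner.get("port", 0),
                "transport": banner.get("transport", "tcp"),
                "product": banner.get("product", "unknown"),
            }
        )
    return {
        "ip": host.get("ip_str"),
        "org": host.get("org"),
        "services": sorted(services, key=lambda s: s["port"]),
    }


def lookup_host(api_key, ip):
    """Query Shodan's pre-indexed data for one IP (requires a valid API key)."""
    import shodan  # imported lazily so the summarizer works without the package

    api = shodan.Shodan(api_key)
    return summarize_host(api.host(ip))


if __name__ == "__main__":
    # Invented record using a documentation-range IP address.
    sample = {
        "ip_str": "203.0.113.10",
        "org": "Example ISP",
        "data": [
            {"port": 443, "transport": "tcp", "product": "nginx"},
            {"port": 22, "transport": "tcp"},
        ],
    }
    print(summarize_host(sample))
```

Because the lookup reads from Shodan's index, nothing in this flow sends traffic to the host being researched.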

Stem

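Stem is the go-to library for interacting with the Tor network. It is a controller library: it talks to a running Tor process over its control port to authenticate, inspect circuits, and request a new identity, while the traffic itself is sent through Tor’s SOCKS proxy by an HTTP client such as Requests. Used together, they enable anonymous access to .onion sites and other dark web resources, a critical capability for OSINT tasks involving underground forums or leaked data marketplaces.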
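In practice Stem is usually paired with an HTTP client: the client sends traffic through Tor's SOCKS port, and Stem signals the Tor process over its control port. The following is a minimal sketch under several assumptions: a local Tor daemon is already running, 9050 and 9051 are its SOCKS and control ports (Tor Browser typically uses 9150 and 9151), and Requests is installed with SOCKS support (`requests[socks]`). The helper names are hypothetical.

```python
def tor_proxies(host="127.0.0.1", socks_port=9050):
    """Build a Requests-style proxy mapping for a local Tor SOCKS port.

    The socks5h scheme makes the proxy resolve hostnames, which is required
    for .onion addresses and avoids leaking DNS lookups.
    """
    proxy = f"socks5h://{host}:{socks_port}"
    return {"http": proxy, "https": proxy}


def make_tor_session(socks_port=9050):
    """Return a requests.Session that routes all traffic through Tor."""
    import requests  # needs the optional SOCKS extra: requests[socks]

    session = requests.Session()
    session.proxies.update(tor_proxies(socks_port=socks_port))
    return session


def request_new_circuit(control_port=9051, password=None):
    """Ask the running Tor process for a fresh circuit via its control port."""
    from stem import Signal
    from stem.control import Controller

    with Controller.from_port(port=control_port) as controller:
        controller.authenticate(password=password)
        controller.signal(Signal.NEWNYM)


if __name__ == "__main__":
    print(tor_proxies())
```

Tor rate-limits how often a new identity signal takes effect, so calling `request_new_circuit` in a tight loop will not yield a new exit for every request.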

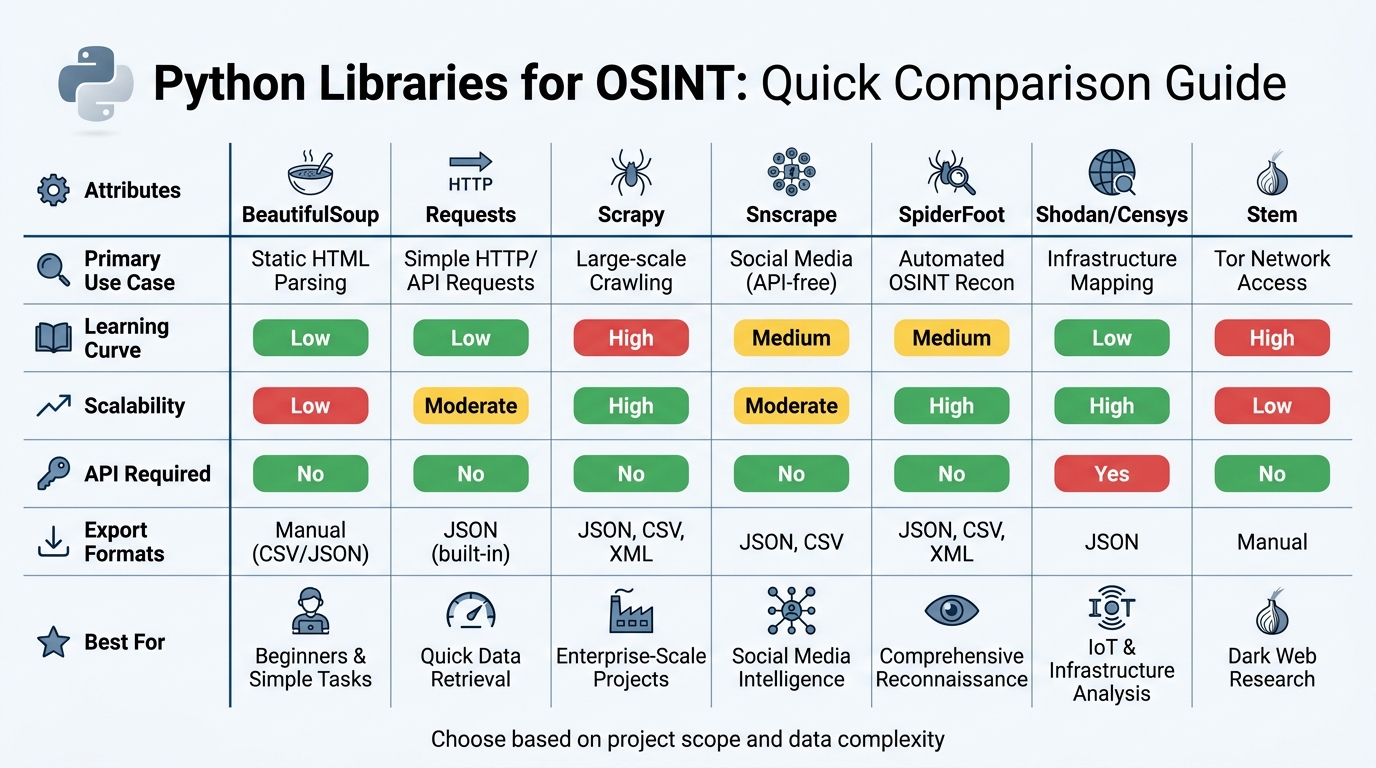

Library Comparison

Python OSINT Libraries Comparison: Features, Scalability, and Use Cases

When it comes to Python libraries for OSINT (Open Source Intelligence), each tool brings its own strengths to the table. Choosing the right one depends on what you’re trying to achieve – whether it’s parsing simple HTML or conducting large-scale web crawling. Your decision should also account for the scope of your project and the type of data you need to collect.

For beginners, Requests and BeautifulSoup are a great starting point. These tools are widely used, with Requests clocking in at around 128.3 million weekly downloads. On the other hand, if you’re dealing with massive amounts of data, Scrapy is a better fit. As Grzegorz Piwowarek, an Independent Consultant, explains:

"Scrapy is asynchronous and built for scale, making it suitable for scraping thousands or even millions of pages".

To make it easier to choose, here’s a quick comparison of some popular libraries:

Comparison Table

| Library | Primary Use Case | Learning Curve | Scalability | API Required | Export Formats |
|---|---|---|---|---|---|
| BeautifulSoup | Static HTML Parsing | Low | Low | No | Manual (CSV/JSON) |
| Requests | Simple HTTP/API Requests | Low | Moderate | No | JSON (built-in) |
| Scrapy | Large-scale Crawling | High | High | No | JSON, CSV, XML |
| Snscrape | Social Media (API-free) | Medium | Moderate | No | JSON, CSV |
| SpiderFoot | Automated OSINT Recon | Medium | High | No | JSON, CSV, XML |
| Shodan/Censys | Infrastructure Mapping | Low | High | Yes | JSON |
| Stem | Tor Network Access | High | Low | No | Manual |

For tasks requiring anonymity or bypassing anti-bot measures, tools like curl_cffi are becoming increasingly important. This tool, for instance, can spoof TLS fingerprints, helping users navigate the growing sophistication of website detection systems. In today’s OSINT landscape, balancing stealth and scalability is more critical than ever.

Best Practices for Web Scraping in OSINT

When using web scraping for OSINT, it’s not just about the tools – it’s about following ethical guidelines and ensuring efficient, secure workflows.

Ethical Considerations

Web scraping in OSINT demands a clear commitment to legal and ethical standards. Start by checking the target site’s robots.txt file to understand which areas are off-limits to web crawlers. Avoid bypassing access controls like paywalls or subscription barriers, as doing so may violate laws like the Computer Fraud and Abuse Act (CFAA) in the U.S. and similar regulations worldwide. Even for publicly available data, handling personally identifiable information (PII) requires caution. Laws like GDPR and CCPA mandate a legitimate legal basis for data collection and secure storage practices.
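The robots.txt check can be automated with the standard library's `urllib.robotparser`. The sketch below parses an inline sample so it runs without network access; the rules, the bot name `osint-research-bot`, and the helper `load_rules` are invented for illustration.

```python
from urllib.robotparser import RobotFileParser

# Invented robots.txt content; a live check would download the real file.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def load_rules(robots_txt):
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    rules = load_rules(SAMPLE_ROBOTS)
    agent = "osint-research-bot"  # hypothetical crawler name
    print(rules.can_fetch(agent, "https://example.com/public/page"))
    print(rules.can_fetch(agent, "https://example.com/private/report"))
    print(rules.crawl_delay(agent))
```

For a live site, calling `set_url("https://example.com/robots.txt")` followed by `read()` on the parser fetches and parses the real file. A permissive robots.txt does not settle the legal questions around terms of service or PII; it only covers crawler etiquette.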

When it comes to scraping frequency, respect rate limits. For smaller websites, aim for one request every 3-5 seconds, while larger platforms can typically handle 1-2 requests per second. As Data Scientist Vinod Chugani aptly states:

"Ethical scraping is as much about restraint as it is about reach".

Transparency is also key. Use an honest User-Agent string that includes contact details, allowing site administrators to contact you if needed. If your project involves sensitive data, prioritize operational security. Tools like the Stem library for Tor routing, VPNs, and dedicated virtual machines can help protect your identity. Finally, maintain detailed logs of your scraping activities to demonstrate that you only accessed publicly available data.
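The pacing guidance above can be enforced in code rather than left to discipline. This is a minimal per-host throttle sketch; `DomainThrottle` is a hypothetical helper, and the injectable `clock` and `sleep` arguments exist only so the behavior can be tested without real waiting.

```python
import time
from urllib.parse import urlsplit


class DomainThrottle:
    """Enforce a minimum interval between requests to the same host."""

    def __init__(self, min_interval=3.0, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last_request = {}  # host -> time of the previous request

    def wait(self, url):
        """Block until the host may be contacted again; return seconds slept."""
        host = urlsplit(url).netloc
        delay = 0.0
        last = self._last_request.get(host)
        if last is not None:
            elapsed = self._clock() - last
            delay = max(0.0, self.min_interval - elapsed)
            if delay > 0:
                self._sleep(delay)
        self._last_request[host] = self._clock()
        return delay


if __name__ == "__main__":
    throttle = DomainThrottle(min_interval=3.0)
    for path in ("/a", "/b"):
        waited = throttle.wait(f"https://example.com{path}")
        print(f"waited {waited:.1f}s before requesting {path}")
```

Calling `throttle.wait(url)` immediately before each request keeps small sites at roughly one request every `min_interval` seconds, while requests to different hosts are not delayed by one another.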

With these ethical and security measures in place, combining multiple libraries can further streamline and enhance OSINT workflows.

Combining Multiple Libraries

The real power of OSINT scraping lies in combining the strengths of various tools. By integrating libraries like Requests, BeautifulSoup, and Scrapy, you can create a workflow that adapts to different challenges. For example, use Requests or HTTPX for fast HTTP requests, BeautifulSoup or lxml for parsing data, and a headless browser like Playwright or Selenium when JavaScript rendering is necessary. This modular approach ensures you’re using the right tool for the task at hand.
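One way to express this modular idea is a static-first fetch that only falls back to a headless browser when the expected element is missing from the raw HTML. In the sketch below, `fetch_with_fallback` and `render_with_playwright` are hypothetical helper names, the fetchers are passed in as plain callables so they can be swapped or faked, and the Playwright portion assumes the package and a Chromium build are installed.

```python
from bs4 import BeautifulSoup


def fetch_with_fallback(url, selector, static_fetch, rendered_fetch):
    """Try a cheap static fetch first; render with a browser only if needed.

    static_fetch and rendered_fetch are callables taking a URL and returning
    HTML. Returns (html, mode) where mode is "static" or "rendered".
    """
    html = static_fetch(url)
    if BeautifulSoup(html, "html.parser").select_one(selector) is not None:
        return html, "static"
    return rendered_fetch(url), "rendered"


def render_with_playwright(url):
    """Return the page HTML after JavaScript has run (needs Playwright)."""
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        try:
            page = browser.new_page()
            page.goto(url, wait_until="networkidle")
            return page.content()
        finally:
            browser.close()


if __name__ == "__main__":
    # Fake fetchers standing in for Requests and Playwright.
    def static(url):
        return "<div id='app'></div>"

    def rendered(url):
        return "<div id='app'><ul class='results'><li>hit</li></ul></div>"

    html, mode = fetch_with_fallback("https://example.com", "ul.results", static, rendered)
    print(mode)
```

In a real pipeline, `static_fetch` could be a thin wrapper around `requests.get(url, timeout=10).text` and `rendered_fetch` could be `render_with_playwright`.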

Session management becomes more efficient with tools like requests.Session(), which helps maintain authentication tokens and headers across multiple requests. For sites requiring login credentials, extract CSRF tokens from initial responses using BeautifulSoup and include them in your subsequent requests. Tools like MechanicalSoup simplify handling complex forms by automating submissions.
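The CSRF step can be sketched as follows, for accounts you are authorized to use. The hidden field name `csrf_token`, the `username` and `password` form keys, and the helper names are assumptions; real sites use their own field names, so inspect the login form first. `login` expects a `requests.Session` so that cookies set by the first response are sent with the second.

```python
from bs4 import BeautifulSoup


def extract_csrf_token(html, field_name="csrf_token"):
    """Return the value of a hidden form field, or None if it is missing."""
    soup = BeautifulSoup(html, "html.parser")
    field = soup.find("input", attrs={"name": field_name})
    return field.get("value") if field else None


def login(session, login_url, username, password, field_name="csrf_token"):
    """Fetch the login form, then post credentials along with its token.

    `session` is expected to be a requests.Session.
    """
    page = session.get(login_url, timeout=10)
    page.raise_for_status()
    token = extract_csrf_token(page.text, field_name)
    if token is None:
        raise ValueError(f"no '{field_name}' field found on {login_url}")
    payload = {"username": username, "password": password, field_name: token}
    response = session.post(login_url, data=payload, timeout=10)
    response.raise_for_status()
    return response


if __name__ == "__main__":
    # Invented login form used to exercise the token extraction offline.
    form = (
        '<form method="post">'
        '<input type="hidden" name="csrf_token" value="abc123">'
        '<input name="username"><input name="password" type="password">'
        "</form>"
    )
    print(extract_csrf_token(form))
```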

To avoid detection, take extra precautions. Replace standard Selenium implementations with undetected-chromedriver to hide common automation fingerprints like the navigator.webdriver flag. For handling sites with Cloudflare’s "Under Attack" mode, integrate Cloudscraper to bypass JavaScript challenges. When faced with CAPTCHAs, services like 2Captcha offer solutions at minimal cost, supporting tasks ranging from simple image CAPTCHAs to more complex reCAPTCHAs.

For larger-scale projects, consider using Scrapy as the core framework. Integrate tools like Playwright or Selenium via middleware to handle pages requiring JavaScript execution, so that only the pages that actually need a browser incur its overhead. Expand your capabilities by incorporating specialized modules like ExifRead for extracting image metadata, ipwhois for network information, and phonenumbers for identifying carrier details. To keep your environment clean and avoid dependency conflicts, always work within virtual environments using venv or Anaconda.

Conclusion

Python libraries have revolutionized OSINT workflows by automating data collection and turning raw web data into actionable insights.

Requests simplifies data acquisition by offering an API that handles HTTP requests with ease. BeautifulSoup makes parsing messy HTML structures more efficient, saving hours of manual work. For larger-scale operations, Scrapy shines with its asynchronous crawling capabilities, managing thousands of concurrent requests seamlessly. Additionally, specialized tools for JavaScript-heavy websites enable data extraction through simulated user interactions, capturing content that only appears after client-side rendering.

When combined into automated pipelines, these tools help structure data for instant analysis. As Emma Foster, a Machine Learning Engineer, explains:

"Python’s dominance in web scraping isn’t accidental… Its clear syntax makes it relatively easy to learn and write, even for those new to programming".

This seamless integration not only enhances technical capabilities but also addresses the increasing demand for efficient data solutions. With the global data analytics market expected to hit $655.8 billion by 2029, growing at a 12.9% CAGR, the importance of effective data collection cannot be overstated. By leveraging these libraries, OSINT professionals can identify threats more quickly and make better-informed decisions.

At the same time, adhering to ethical standards is essential when designing these workflows. Selecting the right tools for each task while following best practices ensures that OSINT workflows remain both effective and responsible.

FAQs

When should I use Scrapy instead of Requests and BeautifulSoup?

Scrapy is a great choice for large-scale web scraping projects where scalability, performance, and advanced features are a priority. It shines in scenarios requiring automatic crawling, efficient request scheduling, or robust data pipelines. If your project involves managing multiple requests or handling pagination seamlessly, Scrapy is built to handle that workload effectively.

On the other hand, Requests and BeautifulSoup are better suited for smaller, straightforward tasks. They work well for fetching individual pages or interacting with APIs, especially when dealing with static content. These tools offer fine-grained control over HTTP requests, making them ideal for simpler, more focused scraping needs.

How do I scrape JavaScript-heavy pages without getting blocked?

To extract data from JavaScript-heavy pages without triggering blocks, tools like Selenium with WebDriver or Playwright for Python are excellent options. These tools can render JavaScript and mimic real user interactions, making them ideal for such tasks. Additionally, libraries such as Pydoll and Scrapling are designed to handle anti-bot mechanisms effectively.

For extra security, consider using headless browsers paired with rotating IP addresses and dynamic user-agent strings. This approach helps reduce the chances of detection while scraping.

What legal and ethical rules should I follow when scraping for OSINT?

When gathering OSINT, it’s crucial to stick to legal and ethical practices to avoid potential problems. Legally, make sure to respect website terms of service and adhere to the rules outlined in robots.txt files. Ethically, avoid overwhelming servers with too many requests, as this can disrupt their functionality. Be clear about your purpose, handle the data responsibly, and stay informed about current regulations and best practices. Following these steps helps minimize risks and ensures responsible data collection.